AI NOC, Not AI-on-NOC: Aviz Flips the Stack

Thomas Scheibe walked on stage and said the quiet part out loud. Most “AI for networking” pitches you’ve sat through this year work the same way: the vendor has a controller, bolts on an LLM, and calls it agentic. Thomas’s exact words were that this is “10% of the problem”… that’s a generous read.

Aviz Networks presented the delegates at NFD40 with the inverse pitch. Start from what the operator is actually trying to do, then figure out which data, tools, and agent workflows you need to do it. The LLM is just a component, not the entire product. They call it the AI NOC, and Cody McCain spent the back half of the session demoing it against a deliberately mismatched lab full of Cisco, Arista, Dell/SONiC, Fortinet, and Palo Alto gear.

If you don’t know Aviz, their elevator pitch is “Red Hat for SONiC.” They are a networking software company, built from the ground up to be multi-vendor. They operate in five countries, with 100+ employees, and they pick up the phone when your SONiC box does something weird at 3am. They ship a SONiC controller (ONES), an observability product, NVIDIA AI Factory integrations, and of course Network Copilot, the topic of their presentation.

Why “AI NOC” instead of “LLM-on-controller”

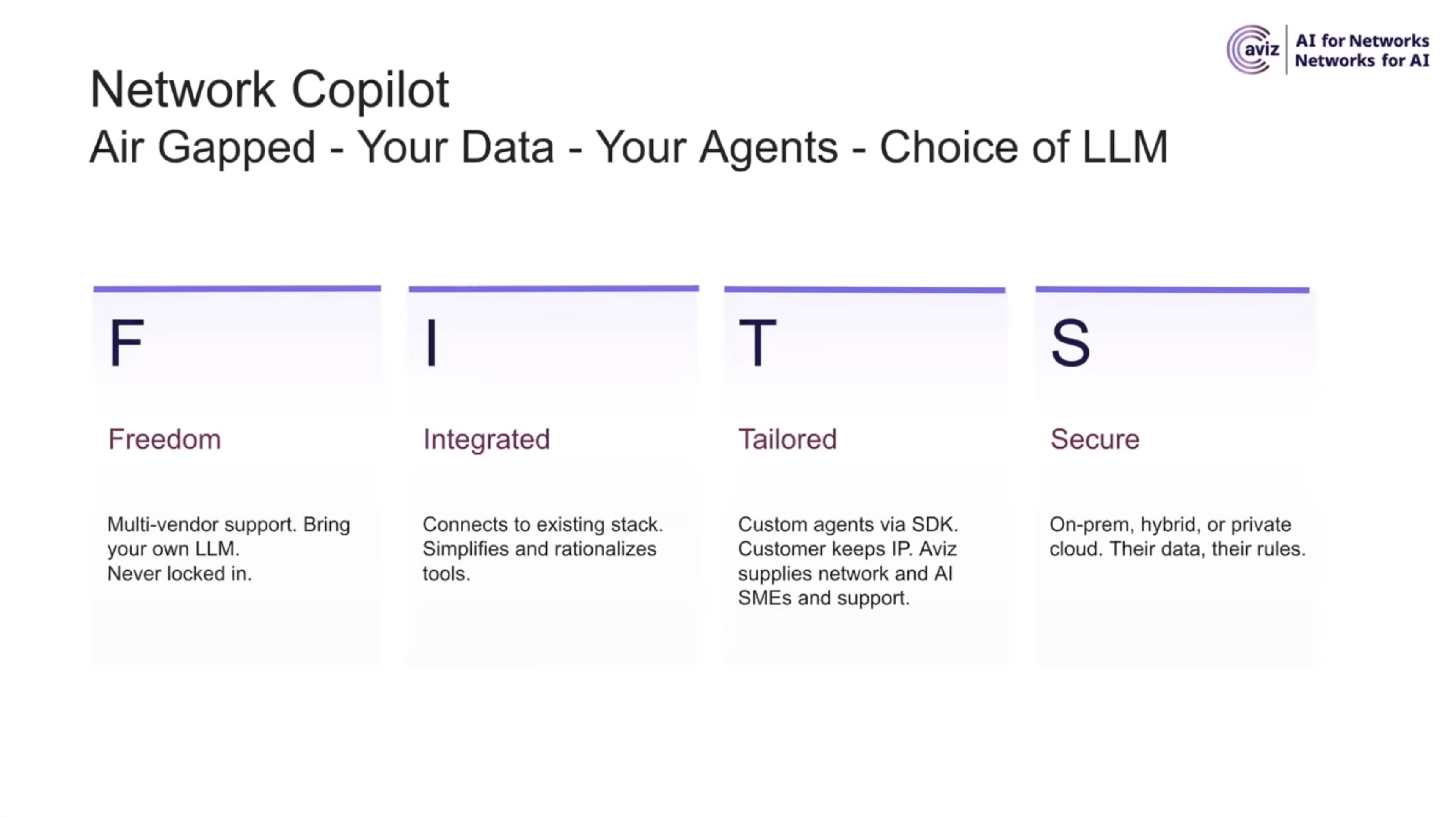

Choice of LLM. Network Copilot ships today with GPT-OSS 120B running locally. Yesterday it was Llama 3 70B. Tomorrow it will be whatever’s on Hugging Face that week. They started this product with a packaged open-source model because that’s the path of least resistance, then immediately ran into customers saying “we already have an approved Gemini instance, point at that.” So they did. Bring your own model, packaged or remote, behind your own guardrails.

Integration with what you already have. This is the multi-vendor claim, and it’s the one that matters. Aviz doesn’t own the controller, doesn’t own the switch, doesn’t own the ticketing system. They built data connectors (REST, or MCP where the vendor exposes one) for ServiceNow, NetBox, controllers from various vendors, firewalls, flow data, CMDBs, and the rest. The agent platform is the layer that ties them together. Cody made the point that as more tools ship MCP endpoints, that integration story gets cheaper for Aviz over time, because the LLM negotiates capabilities directly with the endpoint instead of waiting for Aviz to ship a connector update.

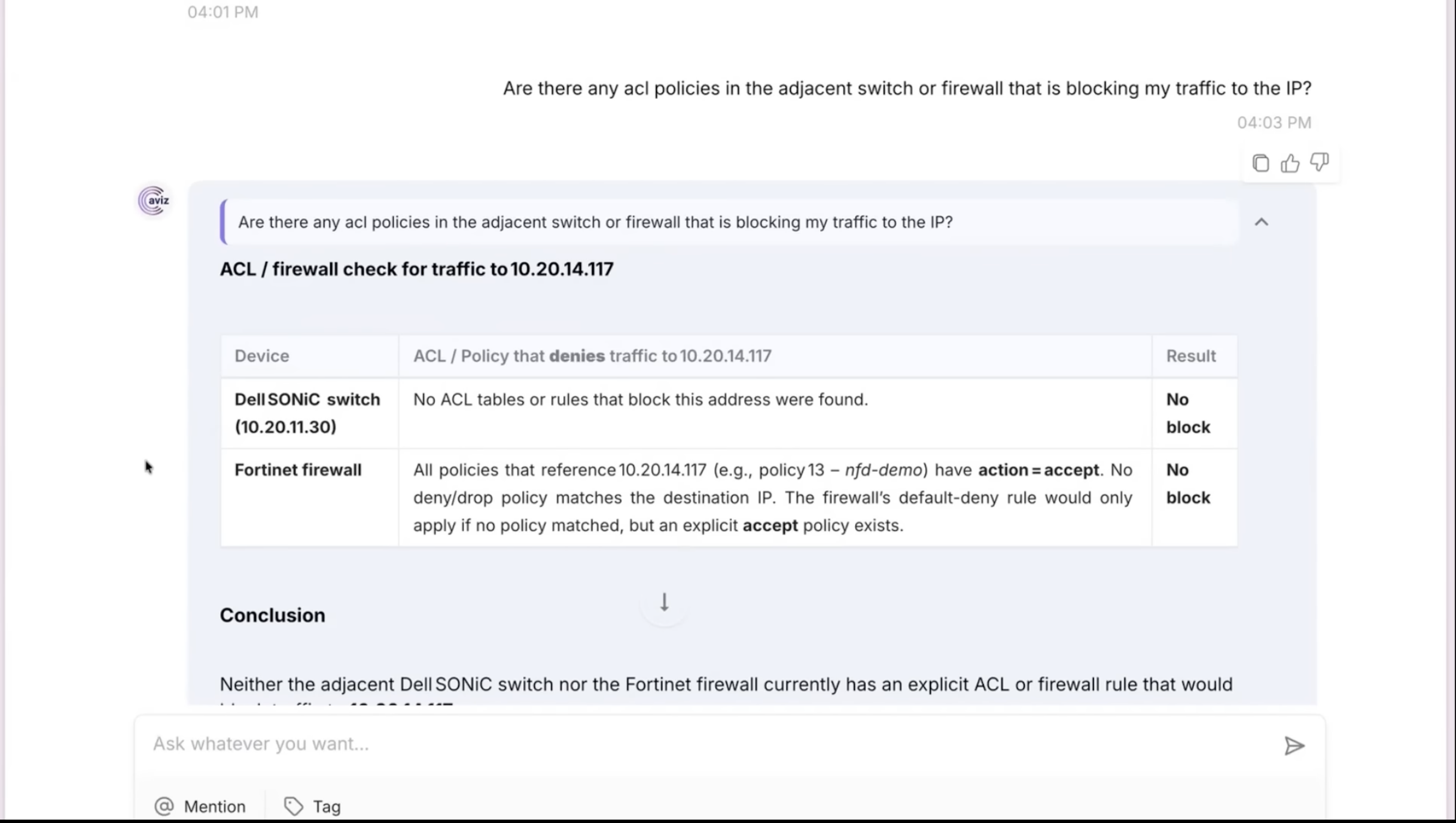

Customization through the SDK. This is the part most vendors gloss over. The out-of-the-box agents handle the obvious cases: “tell me about ticket 18”, “check the ACLs on that switch”, “are there firewall rules blocking this destination.” The actual value, per Cody, comes from your team writing custom agents that encode your change management process, your risk tolerance, your runbooks, on top of the network domain knowledge Aviz already shipped. They have a Python SDK for it. The demo included a custom firewall RCA agent uploaded specifically for the session.

Security as a precondition. This is where the AI NOC framing earns its keep. Cody said it cleanly: “demos are easy on a laptop, production needs guardrails.” The product ships with role-based access control over devices, data connectors, files, LLMs, and custom agents as separate resource classes. Hierarchical agent delegation with one designated decision-maker, no autonomous agents fighting for control. Human-in-the-loop gates configurable per change class. Run the front end on your server, run the GPU endpoint on your dedicated lease, keep the data on prem. None of this is novel. All of it is missing from most of the demos you’ve seen this year.
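To make the access-control idea concrete, here is a minimal sketch of per-resource-class RBAC with a human approval gate on certain change classes. This is not Aviz's implementation or API. The resource classes come from the session, but `Role`, `authorize`, the change-class names, and the device and model names are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class ResourceClass(Enum):
    # The five resource classes named in the session.
    DEVICE = "device"
    DATA_CONNECTOR = "data_connector"
    FILE = "file"
    LLM = "llm"
    CUSTOM_AGENT = "custom_agent"


@dataclass
class Role:
    name: str
    # Maps each resource class to the set of resource names this role may use.
    grants: dict = field(default_factory=dict)

    def allows(self, resource_class, resource_name):
        return resource_name in self.grants.get(resource_class, set())


# Hypothetical change classes that need a human sign-off before execution.
APPROVAL_REQUIRED = {"firewall_rule_change", "vlan_change"}


def authorize(role, resource_class, resource_name, change_class=None, approved=False):
    """Return 'deny', 'needs_approval', or 'allow' for a requested action."""
    if not role.allows(resource_class, resource_name):
        return "deny"
    if change_class in APPROVAL_REQUIRED and not approved:
        return "needs_approval"
    return "allow"


# Hypothetical role: one switch and one model, nothing else.
noc_operator = Role(
    name="noc_operator",
    grants={ResourceClass.DEVICE: {"leaf-01"}, ResourceClass.LLM: {"local-model"}},
)
```

The point of the shape is that the check lives outside the model. The agent can ask for anything; the answer to "may I" never comes from the prompt.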

Demo was a Trojan horse for the actual story

The on-stage demo opens with a ServiceNow ticket: “I can’t reach my server at 10.x.x.x.” Pure end-user finger-pointing. The agent ingests the ticket, extracts the IP from the description, identifies the adjacent switch, queries it for ACLs (none), then walks upstream to the Fortinet and finds nothing blocking. Mean time to innocence achieved. Cody then changes a firewall rule live to deny that traffic, asks the agent “can you check the firewall again” with no other context, and the agent, holding the conversation history, finds the new deny rule and reports it. A second demo adds a VLAN to a switch using natural language with no CLI semantics; the agent figures out which device from context.
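The first step of that workflow, pulling an address out of free-text ticket prose, is easy to picture in code. The sketch below is a deterministic stand-in using only the Python standard library. It is not how Network Copilot does it (the session did not go into that level of detail), and the function name and sample address are hypothetical.

```python
import ipaddress
import re

# Loose pattern for dotted quads; real validation happens in ipaddress below.
_IPV4_CANDIDATE = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")


def extract_ipv4(text):
    """Return every valid IPv4 address found in free text, in order."""
    found = []
    for candidate in _IPV4_CANDIDATE.findall(text):
        try:
            found.append(ipaddress.IPv4Address(candidate))
        except ipaddress.AddressValueError:
            # Looked like an address but is not one (e.g. an octet above 255).
            continue
    return found


# Sample ticket text with an address from the documentation range.
ticket = "I can't reach my server at 192.0.2.10."
addresses = extract_ipv4(ticket)
```

Everything after this step (finding the adjacent switch, checking ACLs, walking upstream) depends on the connectors, which is where the platform earns its keep.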

This is fine. It’s a competent demo… it’s also not the interesting part.

The interesting part showed up when Tom Hollingsworth asked about installations where the inventory data is a disaster. Half the devices not in NetBox, the other half in NetBox with wrong information, three different sources of truth that all disagree. Standard enterprise reality, especially in shops without dedicated NetOps tooling budgets.

Cody’s answer, and Thomas’s emphatic agreement, was that this is the highest-value early use of an agentic platform. Not RCA. Not the chat-driven config change. Data hygiene. Point the agent at all your inventory sources, ask it where they disagree, ask it which records are missing where, ask it which tools you can probably retire. Thomas: “if you have multiple different databases… this is an easy way to do data hygiene.” He went further and called it the number-one use case he’d recommend for most customers they’re engaging with.
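The reconciliation itself is not exotic. As a rough sketch of what "ask it where they disagree" boils down to, here is a plain-Python comparison of inventory sources keyed by hostname. The source names, hostnames, field names, and addresses are hypothetical sample data, and a real agent would first have to pull and normalize these records through its connectors.

```python
def reconcile(sources):
    """Compare inventory sources keyed by hostname.

    `sources` maps a source name to {hostname: {field: value}}.
    Returns (missing, conflicts):
      missing   -> {hostname: sorted list of sources lacking that record}
      conflicts -> {hostname: {field: {source: value}}} where values differ
    A field absent from one source shows up as None and counts as a conflict.
    """
    all_hosts = set()
    for records in sources.values():
        all_hosts.update(records)

    missing, conflicts = {}, {}
    for host in sorted(all_hosts):
        absent = sorted(name for name, records in sources.items() if host not in records)
        if absent:
            missing[host] = absent

        present = {name: records[host] for name, records in sources.items() if host in records}
        fields = set()
        for record in present.values():
            fields.update(record)
        for field_name in sorted(fields):
            values = {name: record.get(field_name) for name, record in present.items()}
            if len(set(values.values())) > 1:
                conflicts.setdefault(host, {})[field_name] = values
    return missing, conflicts


# Hypothetical sample data: two sources that disagree.
sources = {
    "netbox": {
        "leaf-01": {"mgmt_ip": "192.0.2.11"},
        "leaf-02": {"mgmt_ip": "192.0.2.12"},
    },
    "cmdb": {
        "leaf-01": {"mgmt_ip": "192.0.2.21"},
    },
}
missing, conflicts = reconcile(sources)
```

With this sample data, `missing` reports that `leaf-02` is absent from the CMDB and `conflicts` reports the two different management IPs for `leaf-01`. The hard part in practice is the normalization in front of this function, not the diff.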

That’s the under-told story. The RCA demo is what you put on the slide because it’s flashy. The data reconciliation use case is what actually pays for the product in year one, before anyone trusts the agent enough to let it touch a config.

The audience pushback during the session was almost entirely about trust. Can it do subnet math? What if two agents disagree? How do I keep it from hallucinating? Can I make it ask permission before changing things? Can I personalize its behavior per user?

Cody’s answers all landed in roughly the same place: yes, but the point of the platform is that you decide where the rails are. The hierarchical agent model has one decision-maker by design. The LLM gets schema-constrained data and instructions about what a valid answer looks like, so an answer about a device with an IP that doesn’t exist in inventory is flagged as wrong. RBAC gates which agents can talk to which resources, so your VLAN-add agent might not have any device access for a given user. Guardrails on tools, not just on prompts, because LLMs treat instructions and data as the same bucket and can be talked out of in-prompt rules.
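A minimal sketch of what a tool-side guardrail can look like, assuming the model returns a small structured answer with `device` and `ip` fields. Those field names, `validate_answer`, and `same_subnet` are hypothetical and not part of any Aviz API. The idea is simply that the check runs in code the model cannot argue with, and that subnet math goes to a deterministic helper instead of the LLM.

```python
import ipaddress


def validate_answer(answer, inventory_ips):
    """Return a list of problems; an empty list means the answer passed.

    `answer` is the model's structured output (a dict).
    `inventory_ips` is a set of ipaddress objects known to inventory.
    """
    problems = [f"missing field: {key}" for key in ("device", "ip") if key not in answer]
    if problems:
        return problems
    try:
        ip = ipaddress.ip_address(answer["ip"])
    except ValueError:
        return [f"not an IP address: {answer['ip']!r}"]
    if ip not in inventory_ips:
        problems.append(f"{ip} is not in inventory")
    return problems


def same_subnet(ip, network):
    """Deterministic subnet membership check, done outside the model."""
    return ipaddress.ip_address(ip) in ipaddress.ip_network(network)


# Hypothetical inventory with a single known address.
inventory = {ipaddress.ip_address("192.0.2.11")}
```

An answer that names `192.0.2.99` comes back flagged, however confident the model sounded when it produced it.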

Tom Hollingsworth had the line of the session, and it’s the right frame for all of this: treat the agents like interns. Give them the right environment, the right controls, the right guardrails. Not glamorous. But that’s how you build trust toward more autonomous behavior, one workflow at a time.

This is the part of the AI-for-networking conversation that most vendors are skipping. The demo where the LLM answers a question is the new baseline. The demo where the LLM is constrained, audited, scoped, and integrated cleanly into a real change management process is the one that ships to production.

What you give up

The Aviz model has a real trade-off, and it deserves to be named honestly. When you buy an integrated vendor’s AI feature, you also buy the integration work they did. The connectors are tested, the schemas match, the agent knows what your controller is going to return. When you buy a multi-vendor platform like Network Copilot, you inherit the integrator’s burden: you’re now the one stitching together SONiC support, ServiceNow, NetBox, your controller of choice, and an LLM, and the value of the system depends substantially on the quality of the agents your team writes on top of the SDK.

For shops with engineering capacity and a multi-vendor reality (which is most of them, regardless of what their preferred vendor’s slide deck says), that’s a feature, not a bug. For shops looking to buy a turnkey AI Ops button, this isn’t the product.

Aviz isn’t pretending otherwise. Thomas was clear on stage that the operators they’re talking to don’t want a tool that decides their workflow for them. They want a platform that runs their workflow. Different bet, different customer.

Where to start

If you’ve been watching the AI-in-networking space and getting tired of demos that all look the same, the Aviz session is the one to go back and watch. The demo work is solid. The architectural argument behind it is the interesting part, and Thomas and Cody were both willing to engage substantively with the audience instead of staying on script. They have a sandbox, they’ll set you up with a demo environment if you ask, and the SDK is the thing to look at if you want to understand how this is supposed to work in your environment specifically.

The data hygiene use case is the one I’d pilot first.

Disclosure: I attended Networking Field Day 40 as a delegate. My travel and accommodations were covered, but I was not compensated for this post and the opinions are my own.

Links & Resources

Other Delegate Posts

- NFD40: Aviz Manages SONiC-Based AI Datacenters - Peter Welcher, LinkedIn